Where in the World

Verifying the geographic location of outdoor images

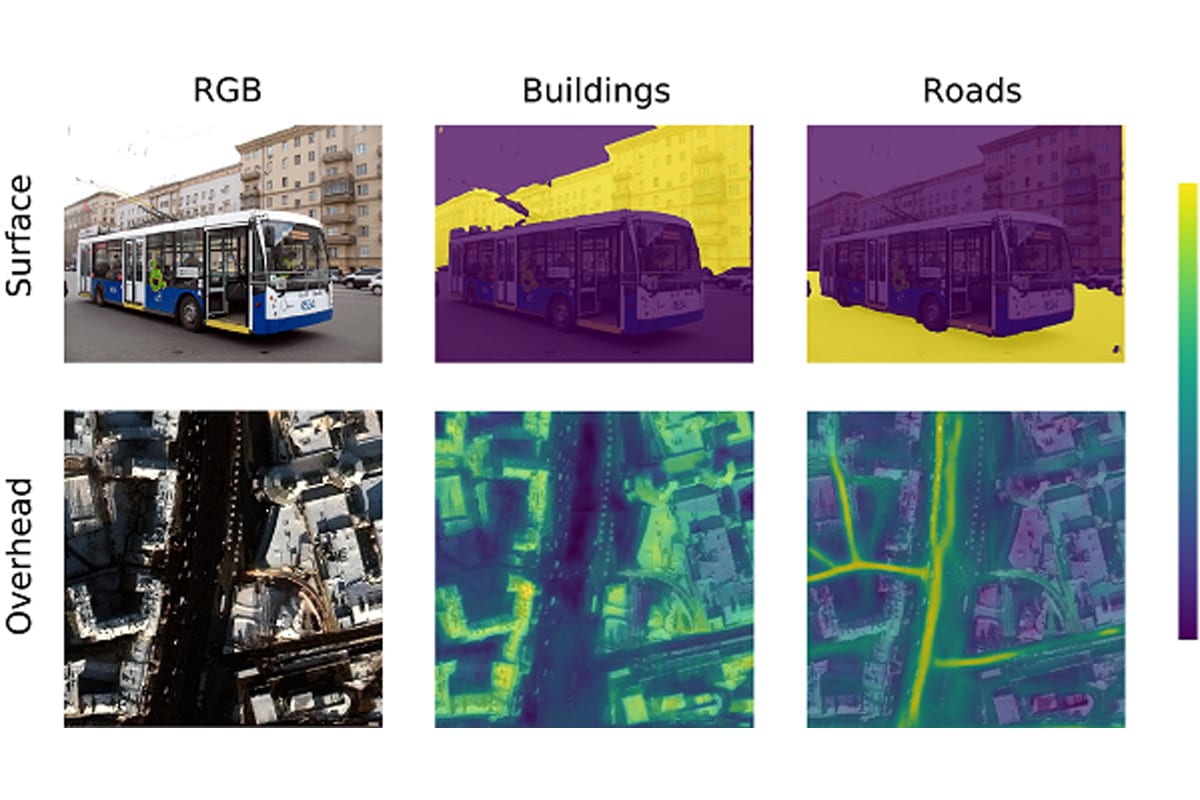

Suppose that a photograph has surfaced under dubious circumstances, raising the question of where it was really taken. One potential solution is cross-view image geolocalization (CVIG): geolocating an outdoor photograph by comparing it to satellite imagery of possible locations. Deep learning approaches to CVIG could ultimately provide a valuable tool for investigative journalists and others who need to assess the veracity of photograph-supported claims.
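To make the idea of "comparing a photograph to satellite imagery" concrete, here is a minimal sketch of the matching step. It assumes that some trained model has already reduced the photograph and each candidate satellite tile to a feature vector, and that cosine similarity is used to score matches. Both are illustrative assumptions rather than a description of the WITW model, and the names `rank_candidates`, `tile_a`, and so on are hypothetical.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two non-zero vectors of equal length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def rank_candidates(photo_embedding, satellite_embeddings):
    """Return candidate tile names sorted from best to worst match.

    photo_embedding: feature vector for the ground-level photograph.
    satellite_embeddings: dict mapping a tile name to its feature vector.
    (Hypothetical interface; the embeddings are assumed to come from a
    trained model that is not shown here.)
    """
    scored = [
        (cosine_similarity(photo_embedding, emb), name)
        for name, emb in satellite_embeddings.items()
    ]
    scored.sort(reverse=True)  # highest similarity first
    return [name for _, name in scored]


# Toy vectors standing in for learned embeddings (made-up numbers).
photo = [0.9, 0.1, 0.3]
tiles = {
    "tile_a": [0.1, 0.9, 0.2],
    "tile_b": [0.8, 0.2, 0.4],
    "tile_c": [-0.5, 0.4, 0.1],
}

ranking = rank_candidates(photo, tiles)
print(ranking)
```

In this toy setup, the tile whose vector points in nearly the same direction as the photograph's vector ranks first, and its location would be the top guess for where the photograph was taken.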

The Where in the World (WITW) project touched on each major component of CVIG deep learning. First, the project produced a novel dataset for CVIG deep learning, unique in its use of real-world photographs drawn from urban environments on five continents. Second, a CVIG deep learning model was developed that incorporates state-of-the-art techniques. The work showed that geolocating real-world photographs is a much more difficult task than geolocating simple panoramas. With the model as a testbed, numerous algorithmic and data quality approaches were evaluated to see which improved model performance and which did not.

Further insights into CVIG model behavior were gleaned by developing a geospatial data visualization method, quantifying the effects of dataset characteristics, and assessing the impact of training dataset size. By using the WITW dataset in concert with the WITW model implementation, different aspects of model performance were analyzed to better understand the strengths and weaknesses of current CVIG capabilities.

Copyright © 2022 · IQT Labs LLC. All Rights Reserved.