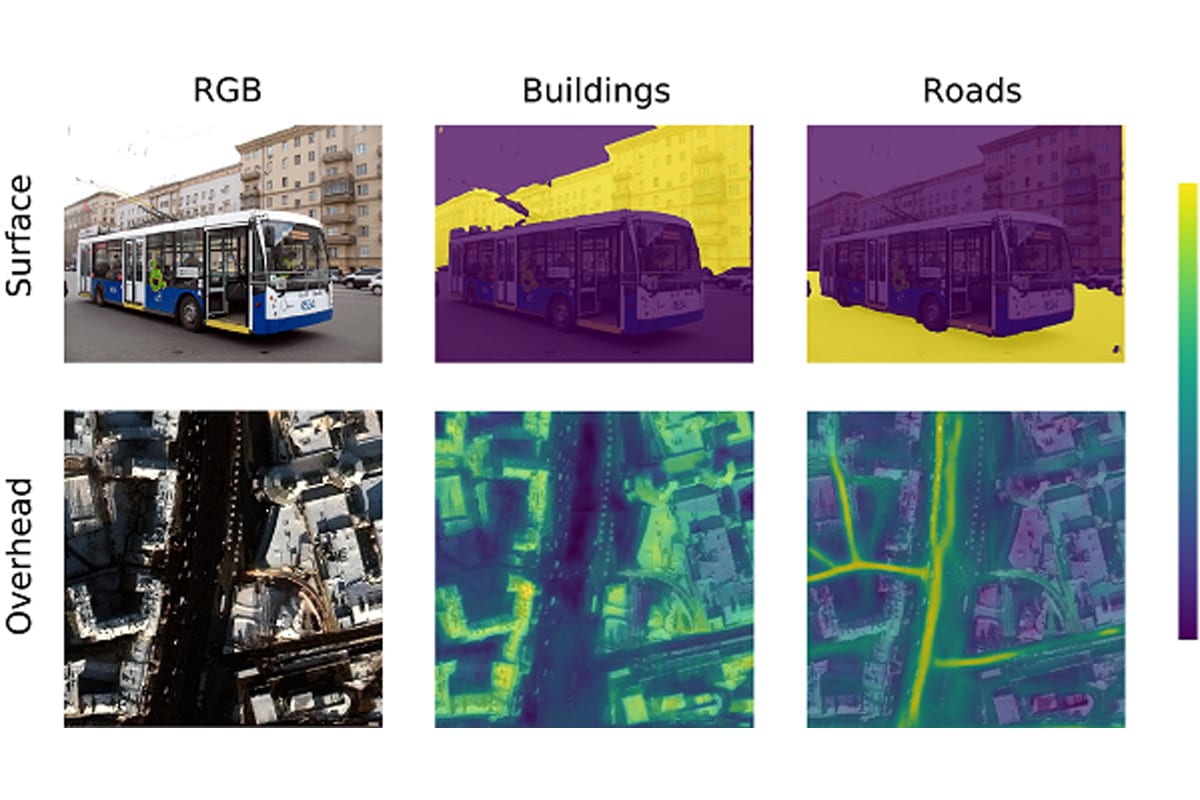

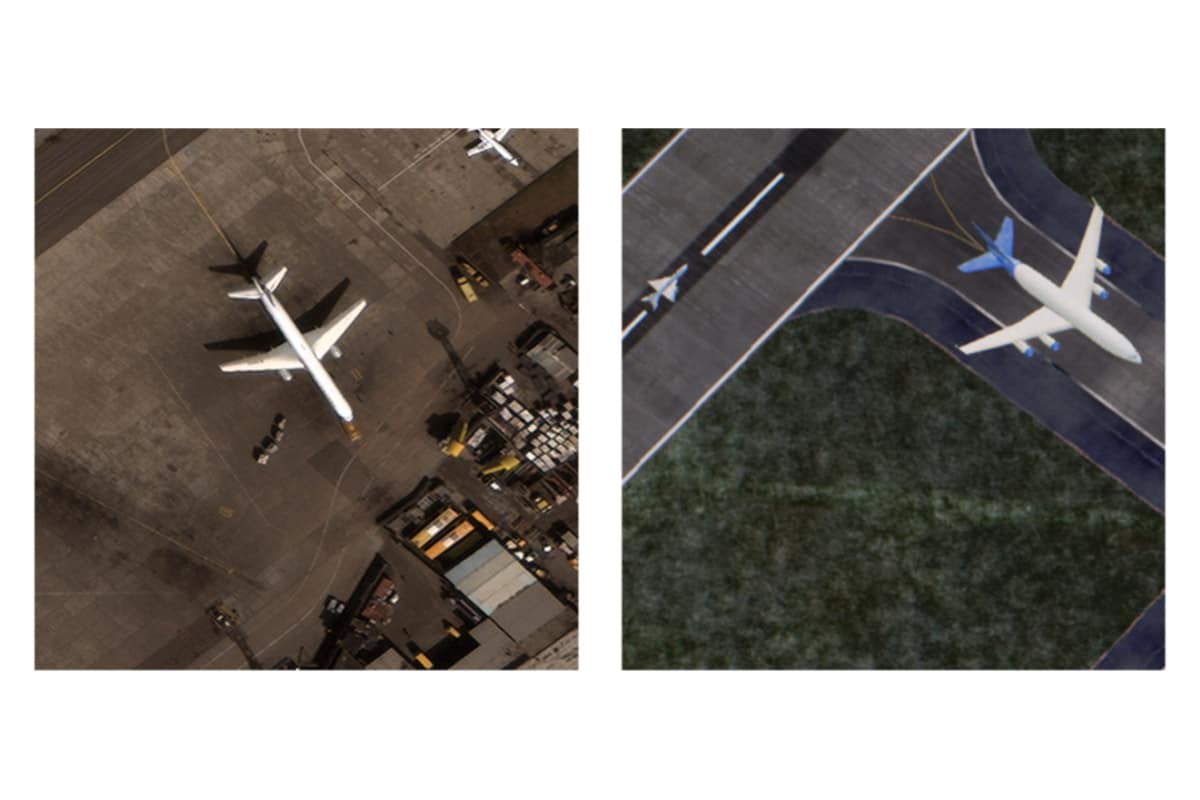

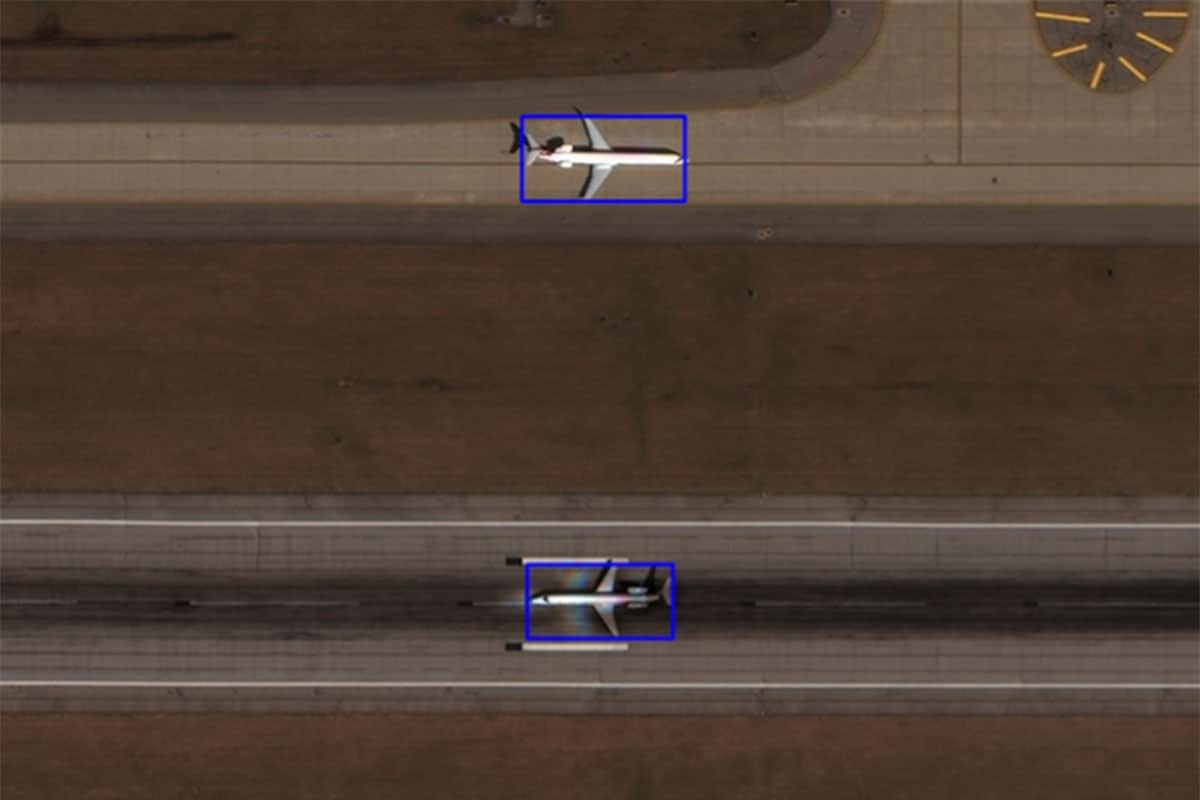

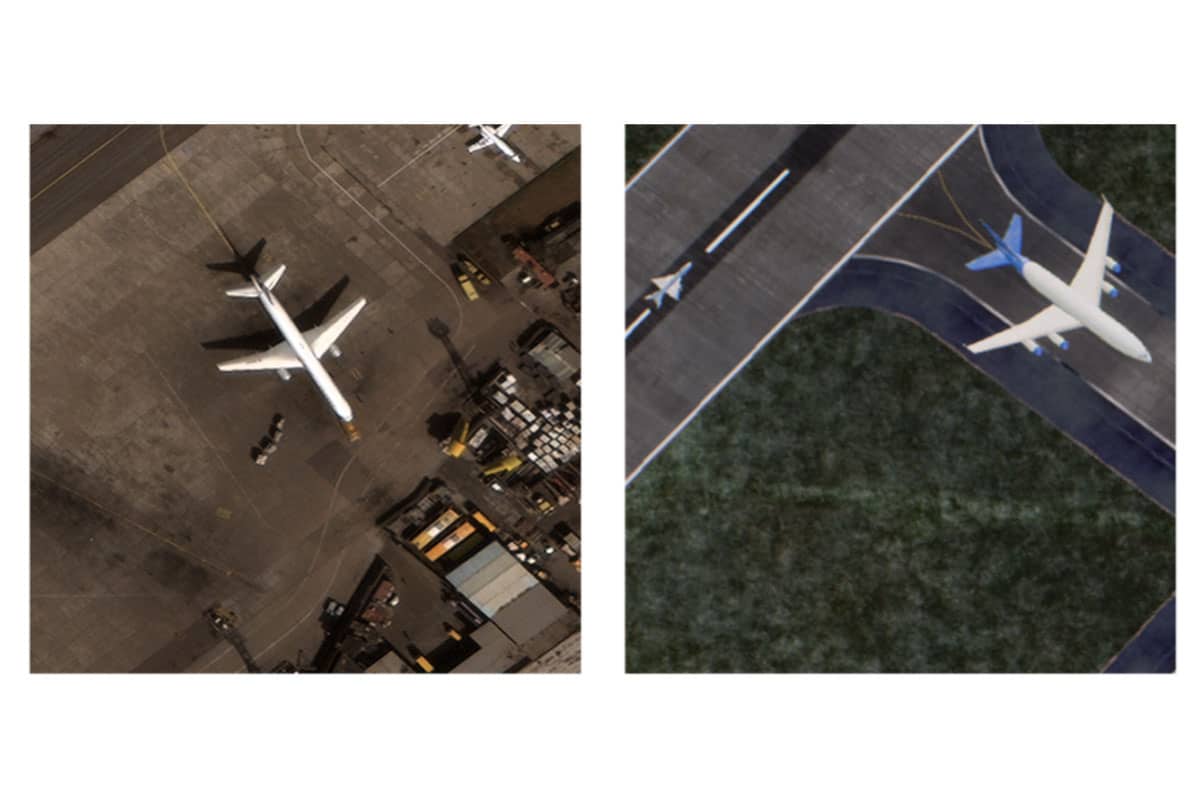

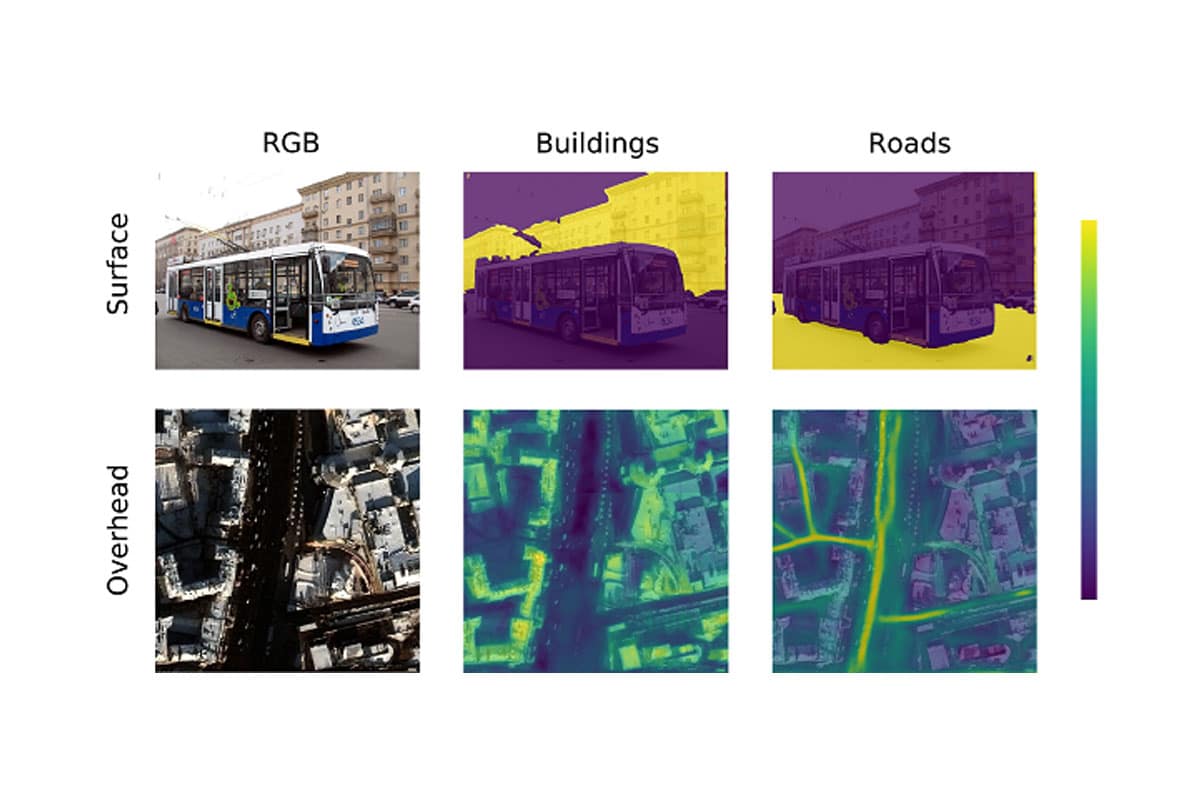

As the Russian invasion of Ukraine plays out before the world on social media, it's critical to verify where the photos and videos being shared were actually recorded. This post explores our attempt to geolocate these social media photos using satellite imagery and deep learning.